Apple To Scan Photos For Child Abuse Imagery: Vigilance Or Privacy Risk?

By Alexa Heah, 06 Aug 2021


Image via Apple

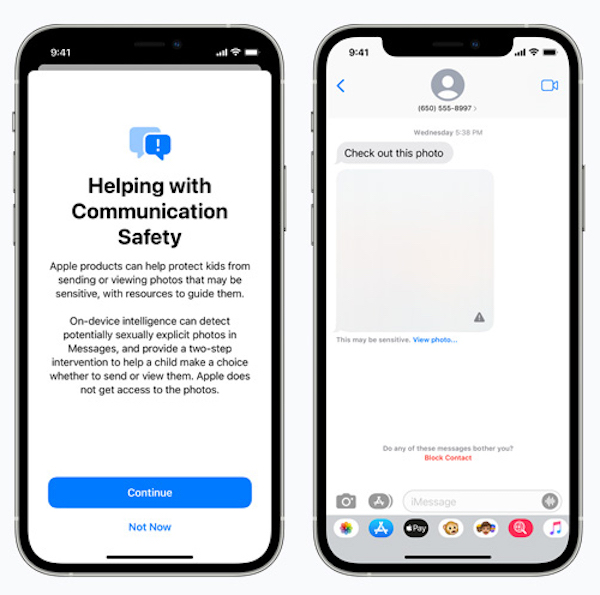

Apple has unveiled plans for new measures to strengthen its devices' child safety features, including new tools for parents, scanning of photos on iPhones and in iCloud, and updates to Siri and Search.

The features will roll out later this year in updates to iOS 15, iPadOS 15, watchOS 8, and macOS Monterey.

Image via Apple

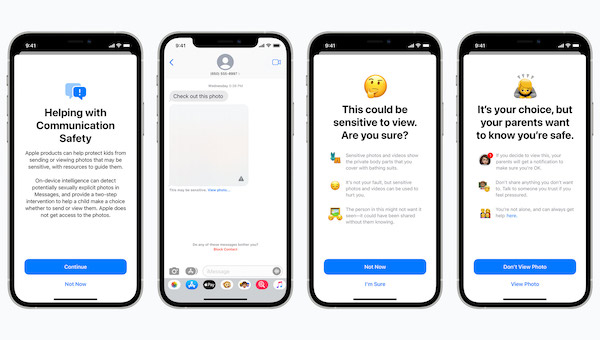

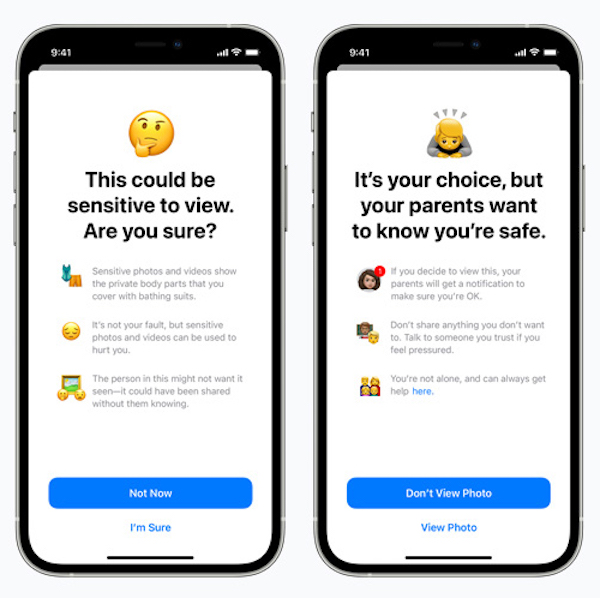

The tech giant's Messages app will include notifications that warn children and their parents when the child sends or receives images detected as sexually explicit. The image will be blurred and accompanied by the warning: "This could be sensitive to view. Are you sure?" The alert will also include helpful resources and will reassure the child that it's okay if they don't want to view the image.

The child will also be told that if they choose to view the image, their parents will be notified. Similar measures apply if a child attempts to send a sexually explicit image: they will be warned first, and their parents will be notified if they go ahead and send it.

Apple said it will not access the messages itself; instead, machine learning running on the device will analyze the attachments.

“Messages uses on-device machine learning to analyze image attachments and determine if a photo is sexually explicit. The feature is designed so that Apple does not get access to the messages,” it explained in a statement.

Apple will also introduce Child Sexual Abuse Material (CSAM) detection, which will allow it to identify known CSAM images stored in iCloud Photos. Users found to have such content will then be reported to the National Center for Missing and Exploited Children (NCMEC).

Not everyone is thrilled, however; some feel that having the company scan their photos could be a major invasion of privacy. Apple has said the feature was "designed with user privacy in mind," and that it would take steps to keep users' details confidential.

Before an image is stored in iCloud Photos, an on-device process will match it against a set of known CSAM hashes. The device then creates a cryptographic safety voucher that encodes the match result, along with additional data about the image.

The voucher is then uploaded to iCloud Photos together with the image. Apple says it will not interpret the contents of the safety vouchers unless an account crosses a threshold of known CSAM content.

If an account exceeds the threshold, Apple will manually review each report to confirm a match before disabling the account and reporting the user to NCMEC. Those who feel they have been flagged by mistake can file an appeal to have their account reinstated.
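For readers curious about the mechanics, the flow described above (hash each photo, compare it against a list of known hashes, count matches, and escalate only past a threshold) can be sketched in a few lines of Python. This is a simplified illustration, not Apple's implementation: the function names and threshold values are hypothetical, SHA-256 stands in for Apple's perceptual hash (NeuralHash), and the cryptography Apple described for keeping vouchers unreadable below the threshold is left out entirely.

```python
import hashlib

# Hypothetical stand-in for the on-device hash. SHA-256 only matches
# byte-identical files; Apple's system used a perceptual hash instead.
def image_hash(image_bytes: bytes) -> str:
    return hashlib.sha256(image_bytes).hexdigest()

def make_voucher(image_bytes: bytes, known_hashes: set) -> dict:
    """Record whether one image matches the known-hash set (no encryption here)."""
    return {"matched": image_hash(image_bytes) in known_hashes}

def needs_human_review(vouchers: list, threshold: int) -> bool:
    """Flag an account for manual review only once matches exceed the threshold."""
    matches = sum(1 for v in vouchers if v["matched"])
    return matches > threshold

# Usage with made-up data: two of three uploads match the known list.
known = {image_hash(b"known-bad-1"), image_hash(b"known-bad-2")}
uploads = [b"holiday.jpg", b"known-bad-1", b"known-bad-2"]
vouchers = [make_voucher(img, known) for img in uploads]
print(needs_human_review(vouchers, threshold=1))  # True: 2 matches > 1
print(needs_human_review(vouchers, threshold=2))  # False: 2 matches is not > 2
```

In this toy version the match results sit in plain view; the point of Apple's described design is that individual results stay unreadable to the company until an account crosses the threshold.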

The company will also offer additional resources through Siri and Search to help keep children and parents safe. For example, users who ask Siri how to report child exploitation will be directed to resources on where and how to file a report.

When users search for topics related to CSAM, they will be told that interest in such topics is harmful and will be offered resources to get help.

As reports emerged that Apple was planning to scan users' iPhones and iCloud Photos, Johns Hopkins University professor Matthew Green raised concerns about the technology, as reported by PetaPixel.

“This is a really bad idea. These tools will allow Apple to scan your iPhone photos for photos that match a specific perceptual hash, and report them to Apple servers if too many appear,” he said.

“Initially I understand this will be used to perform client-side scanning for cloud-stored photos. Eventually, it could be a key ingredient in adding surveillance to encrypted messaging systems.”
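Green's warning hinges on the term "perceptual hash." Unlike a cryptographic hash, a perceptual hash is designed to produce similar outputs for visually similar images, so a resized or slightly edited copy can still match. The sketch below shows one of the simplest such schemes, average hashing, assuming the photo has already been shrunk to an 8x8 grayscale grid. It is only an illustration of the general idea: Apple's NeuralHash is based on a neural network and works differently, and the five-bit match tolerance here is a hypothetical choice.

```python
def average_hash(pixels: list) -> int:
    """Average hash: one bit per pixel, set when the pixel is brighter than the mean.

    Assumes `pixels` is a small grayscale grid (e.g. 8x8, values 0-255) produced
    by downscaling the original photo; the downscaling step is omitted here.
    """
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits

def hamming_distance(a: int, b: int) -> int:
    """Number of bits that differ between two hashes."""
    return bin(a ^ b).count("1")

def is_match(a: int, b: int, max_distance: int = 5) -> bool:
    # Hypothetical tolerance: hashes within a few bits count as the same image.
    return hamming_distance(a, b) <= max_distance

# Usage: a brightened copy keeps the same hash; an inverted image does not.
original = [[10 * (r + c) for c in range(8)] for r in range(8)]
brightened = [[p + 20 for p in row] for row in original]
inverted = [[255 - p for p in row] for row in original]
print(hamming_distance(average_hash(original), average_hash(brightened)))  # 0
print(is_match(average_hash(original), average_hash(inverted)))  # False
```

That tolerance for small changes is what lets the scheme catch edited copies, and it is also why critics worry about which images end up in the list being matched against.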

It remains to be seen how the wider community of Apple users will react to the announcement, but it is bound to spark plenty of debate in the meantime.

[via PetaPixel, images via Apple]
