Bot Dishes Out Moral Judgement On Ethical Dilemmas, Including Its Own Existence

By Ell Ko, 03 Nov 2021

Image ID 70038925 © Dmytro Zinkevych | Dreamstime.com

It’s no secret that ethics is an incredibly difficult subject, full of questions that are extraordinarily hard to resolve. Philosophers and thinkers have been grappling with them for longer than we probably know, yet the conundrums still abound.

Something of an answer might be on the horizon, or it might be yet another massive ethical dilemma in itself, and so it goes on. Perhaps unsurprisingly, it comes in the form of AI.

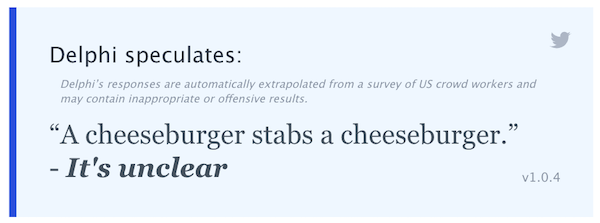

‘Delphi’, the star of Ask Delphi, is a bot designed to tell users whether something is right, wrong, or indefensible. It was named after an oracle in Greek mythology who is “not to be trusted,” the development team writes, and “demonstrates both the promises and the limitations of language-based neural models when taught with ethical judgments made by people.”

The technology was built on almost two million examples of people’s ethical judgements about ordinary, everyday scenarios. Most of these situations come from the popular subreddit ‘Am I the Asshole?’.

“Only the situations used in questions are harvested from Reddit,” the researchers behind Delphi clarify, “as it is a great source of ethically questionable situations.”

It might not be able to give you a definitive answer to the trolley problem, but it will be able to tell you whether it’s wrong to run the blender at 3am when everyone else is asleep.

Image via Ask Delphi

After the questions and scenarios are chosen from the subreddit, the machine is “taught” moral judgement by real people recruited through Amazon’s Mechanical Turk (MTurk) crowdsourcing platform, who must go through a selective process “to qualify to be a moral arbiter,” The Guardian reports. This takes the form of a test, and the researchers exclude anyone who shows signs of prejudice such as racism or sexism.

When the bot’s new knowledge was tested, the arbiters agreed with its judgements up to 92% of the time.

It’s important to note, though, that the bot displays mostly US-centric moral and cultural knowledge because it was trained largely on American values. That said, it could be developed further over time to “capture some cultural variation.”

Of course, it has its flaws, as do most of us. Yejin Choi, a researcher from the University of Washington who worked on the project alongside the Allen Institute for AI, points out that when we face such questions, we already turn to other people for their opinions, and they are imperfect too.

The intention behind Delphi wasn’t to create a moral authority, but simply to help AI work better with humans and “understand” us better.

“We have to teach AI ethical values because AI interacts with humans. And to do that, it needs to be aware what values human have,” Choi told The Guardian.

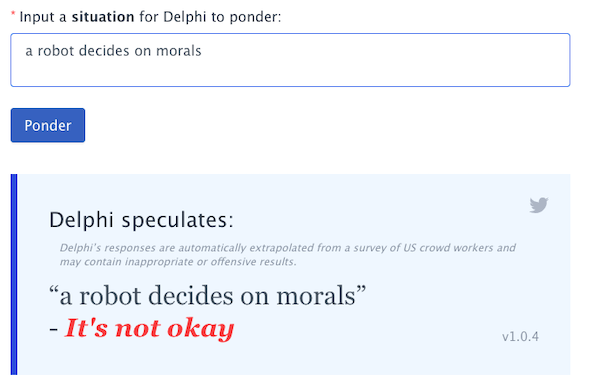

Interestingly, when asked whether it’s okay for a robot to decide on morals, Delphi suggests that it is, in fact, not okay.

Image via Ask Delphi

[via The Guardian, cover image ID 70038925 © Dmytro Zinkevych | Dreamstime.com and screenshots via Ask Delphi]
