Meta Debuts Open-Source ‘ImageBind’ AI That Learns To Mimic Human Perception

By Alexa Heah, 10 May 2023

Last month, Meta released an open-source artificial intelligence (AI) tool that helps creatives turn doodles into animations at the click of a button. Now, the technology giant is making yet another system open to the public, dubbed ‘ImageBind’.

Unlike common image generators that create images from a series of words, the software allows users to link text, images, videos, audio clips, 3D (depth) measurements, temperature data, and motion data. More impressively, it offers all of these options without having to be trained on every possible combination of them.

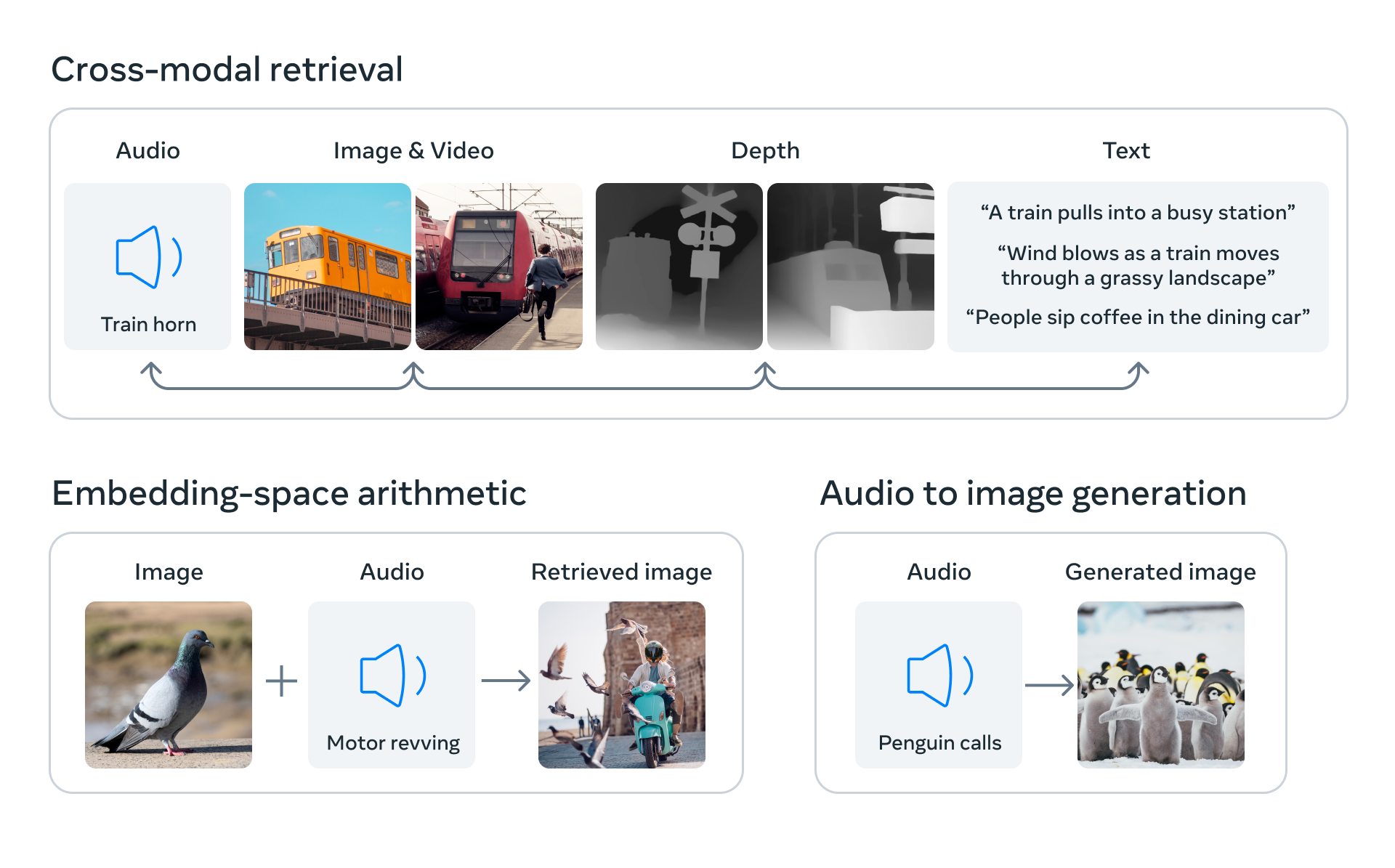

ImageBind works by predicting the connections between different types of data, similar to how humans perceive the environment around them. Instead of just conjuring up an image, the model can attach sounds, temperatures, or even precise locations relevant to the scene.

Through this, AI moves closer to human comprehension, mimicking the way the brain absorbs various stimuli in its surroundings, such as the sights, sounds, and other sensory experiences that come as second nature to us.

“For example, a creator could couple an image with an alarm clock and a rooster crowing, and use a crowing audio prompt to segment the rooster or the sound of an alarm to segment the clock and animate both into a video sequence,” Meta explained.

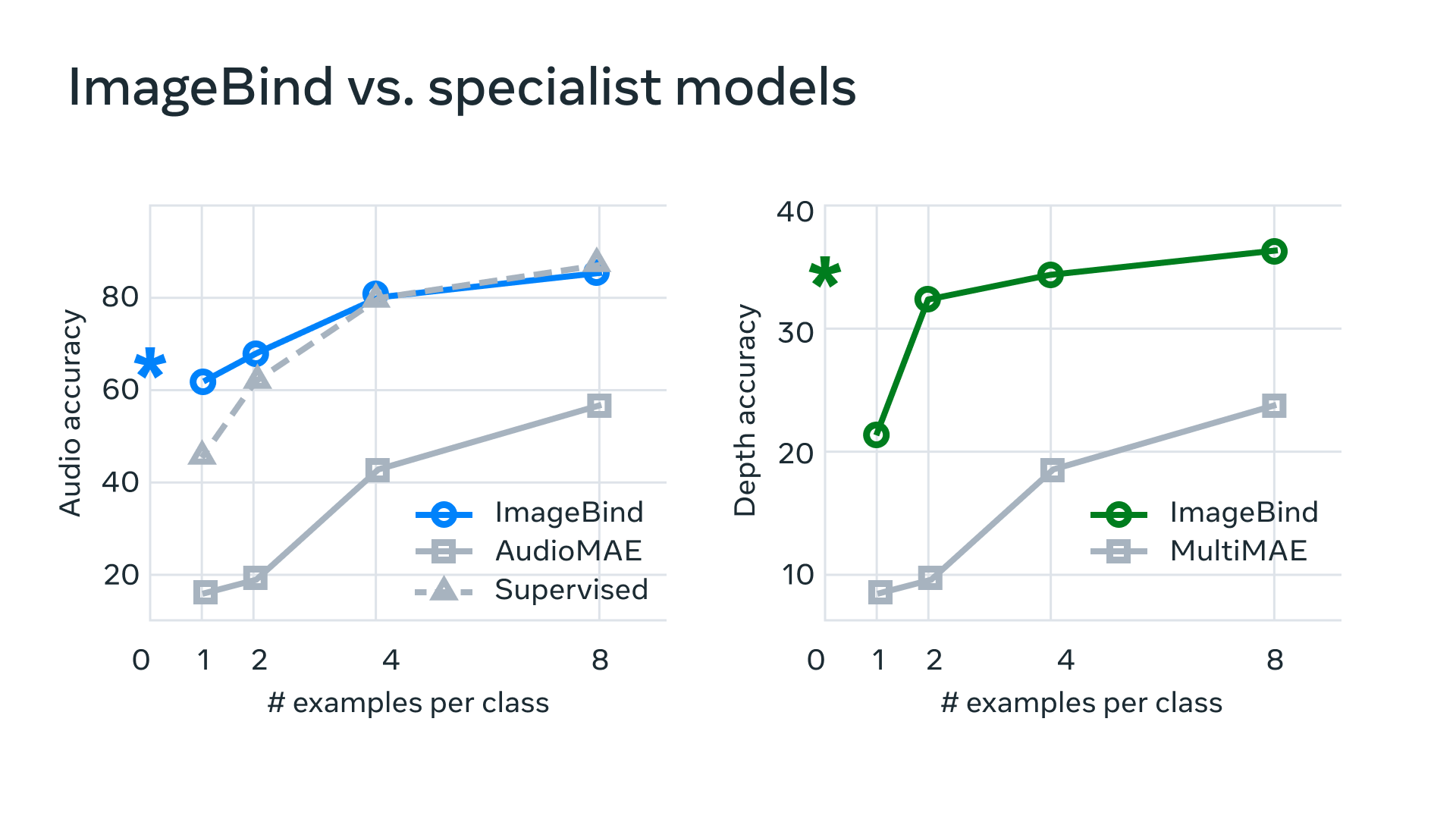

According to graphs provided by the company, it seems ImageBind far outperforms other algorithms in both audio accuracy and depth accuracy, showing that it’s possible to create a “joint embedding space” across multiple modalities without having to train the AI on every single one.

“This is important because it’s not feasible for researchers to create datasets with samples that contain, for example, audio data and thermal data from a busy city street, or depth data and a text description of a seaside cliff,” the blog post continued.
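To make the idea of a “joint embedding space” more concrete, here is a minimal Python sketch of how retrieval across two modalities could work once both are mapped into the same vector space. It is not ImageBind's code or API: the `normalize` and `retrieve` helpers, the labels, and the tiny three-dimensional vectors are all hypothetical, hand-written stand-ins for what real encoders would produce, and cosine similarity is assumed as the matching score.

```python
import numpy as np

def normalize(vectors):
    """Scale each row to unit length so dot products equal cosine similarity."""
    vectors = np.asarray(vectors, dtype=float)
    return vectors / np.linalg.norm(vectors, axis=1, keepdims=True)

def retrieve(query, candidates, labels):
    """Return the label of the candidate most similar to the query."""
    q = normalize([query])[0]
    scores = normalize(candidates) @ q  # one cosine score per candidate
    return labels[int(np.argmax(scores))]

# Hypothetical embeddings: in a real system these would come from
# separate audio and depth encoders that share one output space.
labels = ["rooster", "alarm clock", "ocean"]
audio_embeddings = np.array([
    [0.9, 0.1, 0.0],   # crowing sound
    [0.1, 0.9, 0.1],   # ringing sound
    [0.0, 0.1, 0.9],   # crashing waves
])
depth_embeddings = np.array([
    [0.8, 0.2, 0.1],   # depth map of a rooster
    [0.2, 0.8, 0.0],   # depth map of an alarm clock
    [0.1, 0.0, 0.95],  # depth map of a shoreline
])

# Use an audio clip as the query and search among depth maps.
match = retrieve(audio_embeddings[0], depth_embeddings, labels)
print(match)
```

In this toy setup the crowing-sound vector lands closest to the rooster depth map, even though no audio-depth pair was ever given to the code as a matched example. That mirrors, in miniature, the claim that modalities can be linked without a dataset covering every pairing.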

Meta envisions the technology soon expanding beyond the current “six senses” to include the likes of touch, speech, smell, and even brain fMRI signals, giving a “holistic” approach to machine learning and future mixed-reality gadgets.

Check out the code on GitHub.

[via Engadget and Screen Rant, images via various sources]