Apple Breaks Down How It Would Properly Detect Photos For Child Abuse

By Mikelle Leow, 16 Aug 2021

Image via TungCheung / Shutterstock.com

Apple, as you may have heard, will automatically scan photos on devices, including those on iCloud, for Child Sexual Abuse Material (CSAM) as part of a slew of child safety features arriving later this year. The company hopes the measure will protect children from predators and place parents in “a more informed role in helping their children navigate communication online.”

While the decision is likely well-intentioned, users have pointed out that the feature could border on surveillance and an invasion of privacy. Apple was also less than clear in its explanation of how the technology works. In hindsight, it acknowledges that “confusion” might have arisen from the announcement, and it has now updated its document outlining how photos will be scanned for CSAM.

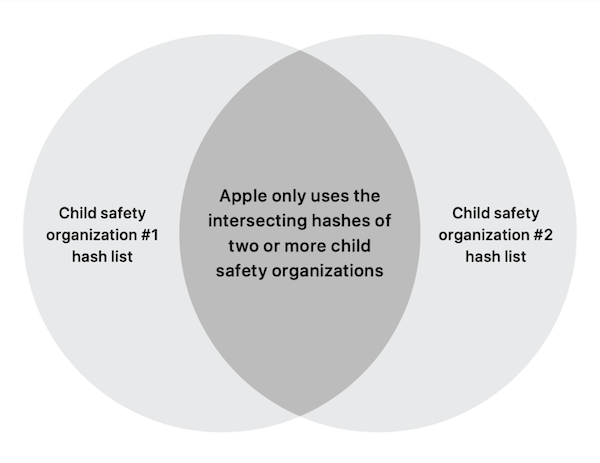

The tech giant now stresses that it will rely on more than one government-affiliated database to scan for abusive imagery. These organizations will be tied to separate jurisdictions, meaning that they aren’t controlled by the same government, to reduce the risk of a government slipping in unrelated content to be censored for political reasons.

And since content slipped in by a single government would not appear among the CSAM hashes of another jurisdiction’s database, users would not have unrelated photos flagged.
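The multi-database safeguard can be pictured as a set intersection: only hashes present in every independent database are used for matching. The sketch below is an illustration under that assumption, not Apple's implementation; the function name `shared_hashes` and the hash strings are hypothetical.

```python
def shared_hashes(*databases):
    """Return only the hashes that appear in every supplied database."""
    if len(databases) < 2:
        raise ValueError("need at least two independent databases")
    # Start from the first database and keep what all the others also contain.
    return set(databases[0]).intersection(*databases[1:])


# Hypothetical hash values, for illustration only.
jurisdiction_a = {"hash-1", "hash-2", "hash-3", "planted-by-a"}
jurisdiction_b = {"hash-1", "hash-2", "hash-3", "hash-4"}

usable = shared_hashes(jurisdiction_a, jurisdiction_b)
print(sorted(usable))
```

In this toy example, the entry that only one jurisdiction supplied (`planted-by-a`) drops out, because no second database vouches for it.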

Image via Apple

Further detailing the technology with the Wall Street Journal, Apple’s senior vice president of software engineering, Craig Federighi, shared that the company is in discussions with additional child protection agencies to keep the feature in check. The United States’ National Center for Missing and Exploited Children (NCMEC) is currently the only group named in the project.

In addition, Apple’s paper elaborates that photos flagged by its algorithm will undergo a second, human visual review to confirm that the images match the hashes. Only then would the account be disabled and a report filed with a child protection organization. Apple will also only flag iCloud accounts with 30 or more CSAM images, a “drastic safety margin” to weed out false positives.
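The order of checks described above (match count first, human review second, action last) can be sketched as follows. This is a simplified illustration, not Apple's code: the names `MATCH_THRESHOLD` and `next_step` and the returned labels are hypothetical, and only the figure of 30 comes from the reporting.

```python
MATCH_THRESHOLD = 30  # reported figure; Apple says it may change over time


def next_step(match_count, reviewer_confirms=None, threshold=MATCH_THRESHOLD):
    """Return the next action for an account with `match_count` hash matches.

    `reviewer_confirms` is None until a human reviewer has looked at the
    flagged images, then True or False.
    """
    if match_count < threshold:
        return "no action"  # below the safety margin, nothing is flagged
    if reviewer_confirms is None:
        return "send to human review"
    if reviewer_confirms:
        return "disable account and file report"
    return "no action"  # reviewer found a false positive


print(next_step(29))
print(next_step(30))
print(next_step(30, reviewer_confirms=True))
```

An account with 29 matches is never flagged, and even at 30 or more nothing happens to the account until a reviewer confirms the matches.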

The technology’s threshold might change over time as the system develops, Federighi confirmed. In time, Apple will also publish the full list of child safety hashes that auditors rely on when assessing the photos, to offer users more transparency.

[via PetaPixel, images via various sources]
