Nvidia Builds ‘3D MoMa’ Tool For Artists To ‘Improvise’ IRL Objects Into 3D

By Mikelle Leow, 22 Jun 2022

Image courtesy of Nvidia

When an idea hits you, your instinct might be to jot it down so you can flesh it out later. In 3D modeling, though, turning that idea into an actual model can take hours or even days.

Naturally, that puts a damper on the creative process. Graphics processing technology firm Nvidia, however, has now made big strides toward instant gratification, turning real-world objects into flexible, editable assets for 3D applications.

The company has created Nvidia 3D MoMa, a technology that can breathe life into photos by turning them into 3D objects. It’s similar to the inverse-rendering system that Nvidia presented back in March, only this time it’s focused on simplifying life for creators, letting them construct 3D objects in about the time it takes to hold a jam session.

A jam what? Yes. The company sees 3D MoMa as the 3D equivalent of jazz, where improvisation is everything. In other words, the tool is built to add depth and dimension to images whenever inspiration strikes.

The technique “could empower architects, designers, concept artists and game developers to quickly import an object into a graphics engine to start working with it, modifying scale, changing the material or experimenting with different lighting effects,” Nvidia explains to us.

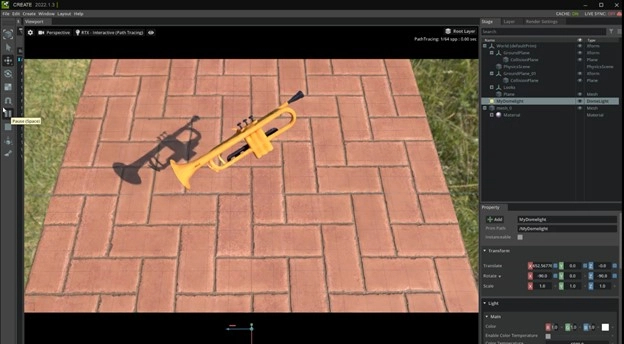

To illustrate, the company collected about 100 images of five jazz band instruments (a trumpet, trombone, saxophone, drum set, and clarinet) from multiple angles and replicated them in 3D.

Video via Nvidia

The technology is optimized for artists’ and engineers’ workflows, so that 3D objects can be dropped seamlessly into game engines, 3D modelers, and film renderers. For that to happen, the objects have to adhere to a universal format.

Image via Nvidia

That universal format is the triangle mesh (pictured below), the basic building block of 3D models, which Nvidia likens to “a papier-mâché model of a 3D shape built from triangles.”

Image via Nvidia
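To make the papier-mâché analogy concrete, here is a minimal, hypothetical sketch in Python. It is not Nvidia’s code, and the article doesn’t say which mesh file format 3D MoMa exports; the `TriangleMesh` class and its `surface_area` and `scaled` methods are illustrative names only. The idea it shows is general: a mesh is a list of 3D points plus a list of triangles that point into that list. The example builds a four-triangle tetrahedron, measures its surface area, and rescales it, echoing the “modifying scale” step Nvidia mentions.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass
class TriangleMesh:
    """Hypothetical minimal mesh: shared vertices plus triangles that index into them."""
    vertices: list[Vec3]
    triangles: list[tuple[int, int, int]]

    def triangle_area(self, tri: tuple[int, int, int]) -> float:
        # Area of one triangle = half the length of the cross product of two edges.
        a, b, c = (self.vertices[i] for i in tri)
        ab = [b[k] - a[k] for k in range(3)]
        ac = [c[k] - a[k] for k in range(3)]
        cross = (
            ab[1] * ac[2] - ab[2] * ac[1],
            ab[2] * ac[0] - ab[0] * ac[2],
            ab[0] * ac[1] - ab[1] * ac[0],
        )
        return 0.5 * math.sqrt(sum(x * x for x in cross))

    def surface_area(self) -> float:
        # Total surface area is the sum over all triangles.
        return sum(self.triangle_area(t) for t in self.triangles)

    def scaled(self, factor: float) -> "TriangleMesh":
        # Scaling only moves the vertices; which vertices form each triangle is unchanged.
        return TriangleMesh(
            [(x * factor, y * factor, z * factor) for x, y, z in self.vertices],
            list(self.triangles),
        )


# A tetrahedron: 4 vertices joined into 4 triangular faces.
tetra = TriangleMesh(
    vertices=[(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)],
    triangles=[(0, 2, 1), (0, 1, 3), (0, 3, 2), (1, 2, 3)],
)

print(len(tetra.triangles), round(tetra.surface_area(), 3))  # prints: 4 2.366
print(round(tetra.scaled(2.0).surface_area(), 3))  # prints: 9.464
```

Doubling the size quadruples the surface area, while the triangle list stays the same. A real scanned instrument would use many thousands of triangles plus texture and material data, but the underlying structure is this simple.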

Once imported, the models can easily be overlaid with 2D textures. Nvidia demonstrated this by turning a “plastic” trumpet object into gold, marble, wood, or cork. Talk about the Midas touch.

Impressively, the firm says the AI can predict how the objects are lit and lets creators tweak the lighting, too.

The system, outlined in a new paper, will be presented at the Conference on Computer Vision and Pattern Recognition this week.

While you can’t test the tech for yourself yet, you can watch its magic unfold in the video below.

[via Nvidia]